Just how important is compression in today’s increasingly technological world, and what exactly is compression, whether lossless or lossy? Before we look at why compression plays such an important role in machine vision, let’s first examine the need for both lossless and lossy compression in general.

Lossless and lossy video compression are two different methods of reducing the file size of video data; each has distinct characteristics and purposes.

Let us begin with lossless video compression.

The goal of lossless compression is to reduce file size without sacrificing any video quality. After lossless compression and decompression, the video is bit-for-bit identical to the original.

Lossless compression works by representing data more efficiently, removing redundancy without discarding any information. It typically relies on techniques such as run-length encoding (RLE) and entropy coding methods such as Huffman coding and arithmetic coding.

Lossless compression is commonly used in professional video editing, for archival purposes, and in any scenario where maintaining the highest quality is paramount, including machine vision. That said, the compression ratios achieved are generally lower than those of lossy compression.
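To make the idea concrete, here is a minimal sketch of run-length encoding, the simplest of the techniques named above. The pixel row and the function names `rle_encode` and `rle_decode` are illustrative assumptions, not part of any particular codec.

```python
from itertools import groupby

def rle_encode(data: bytes) -> list[tuple[int, int]]:
    """Collapse each run of identical bytes into a (value, run_length) pair."""
    return [(value, len(list(run))) for value, run in groupby(data)]

def rle_decode(pairs: list[tuple[int, int]]) -> bytes:
    """Expand (value, run_length) pairs back into the original byte sequence."""
    return b"".join(bytes([value]) * count for value, count in pairs)

# Hypothetical row of 8-bit pixels: dark background with one bright stripe.
row = bytes([0] * 12 + [255] * 4 + [0] * 8)

encoded = rle_encode(row)
decoded = rle_decode(encoded)

print(encoded)         # three runs instead of 24 separate pixel values
print(decoded == row)  # the round trip restores every byte
```

RLE only pays off when the data contains long runs, which is why practical lossless codecs usually combine it with an entropy coder.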

Now, let’s discuss lossy compression.

The main aim of lossy compression is to achieve higher compression ratios by selectively discarding information that is considered less essential to the end process. Lossy compression is typically used for online video streaming, video sharing platforms, and other applications where file size is a critical factor. It allows a significant reduction in file size, making storage and transmission practical, but at the expense of some quality. The more aggressive the compression, the greater the loss of quality.
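One simple way to see this tradeoff is quantization, where each pixel value is snapped to the nearest multiple of a step size. The sketch below is only an illustration of the principle: real lossy codecs are far more elaborate, and the pixel values and step sizes are assumed for the example.

```python
def quantize(pixels: list[int], step: int) -> list[int]:
    """Lossy step: snap each 8-bit value to the nearest multiple of `step`."""
    return [min(255, (p + step // 2) // step * step) for p in pixels]

def max_error(original: list[int], reconstructed: list[int]) -> int:
    """Largest absolute difference between original and reconstructed pixels."""
    return max(abs(a - b) for a, b in zip(original, reconstructed))

# Hypothetical 8-bit pixel values.
pixels = [12, 13, 15, 200, 201, 203, 90, 91]

mild = quantize(pixels, 4)         # small step: little information discarded
aggressive = quantize(pixels, 32)  # large step: much more information discarded

for name, result in (("mild", mild), ("aggressive", aggressive)):
    print(name, result,
          "distinct values:", len(set(result)),
          "max error:", max_error(pixels, result))
```

The larger step leaves fewer distinct values, which a subsequent encoder can represent in fewer bits, but the reconstructed pixels drift further from the originals and the discarded detail cannot be recovered.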

Essentially, lossless compression protects the video’s original quality, with the tradeoff of larger file sizes. Lossy compression achieves higher compression ratios but is only suitable for scenarios where a certain level of quality loss is acceptable. The choice depends upon the specific requirements of your application or use case.
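The comparison can be sketched with Python's standard `zlib` module. The synthetic scanline data, the noise range, and the quantization step of 16 are all assumptions chosen for illustration; actual ratios depend heavily on image content and on the codec used.

```python
import random
import zlib

rng = random.Random(0)  # fixed seed so the synthetic data is repeatable

# Hypothetical 8-bit image data: a slow brightness ramp plus sensor-like noise.
raw = bytes(
    min(255, max(0, i // 64 + rng.randint(-3, 3)))
    for i in range(16_384)
)

# Lossless path: compress the data as-is; decompression restores every byte.
lossless = zlib.compress(raw, 9)
restored = zlib.decompress(lossless)

# Lossy path: discard fine detail by quantizing first, then compress the result.
STEP = 16
quantized = bytes(min(255, (p + STEP // 2) // STEP * STEP) for p in raw)
lossy = zlib.compress(quantized, 9)

print("lossless round trip exact:", restored == raw)
print("lossless ratio:", round(len(raw) / len(lossless), 2))
print("lossy ratio:   ", round(len(raw) / len(lossy), 2))
```

The lossy path produces the smaller output because quantization removes most of the noise, yet the original pixel values can no longer be reconstructed from it.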

In the realm of machine vision, compression is essential for many reasons.

Smaller, compressed data can be moved and stored more quickly than larger, uncompressed data. This is very useful in real-time machine vision applications, where quick processing is essential. Properly designed and optimized compression techniques allow for faster data transfer, decoding, and subsequent analysis, contributing to improved system performance.

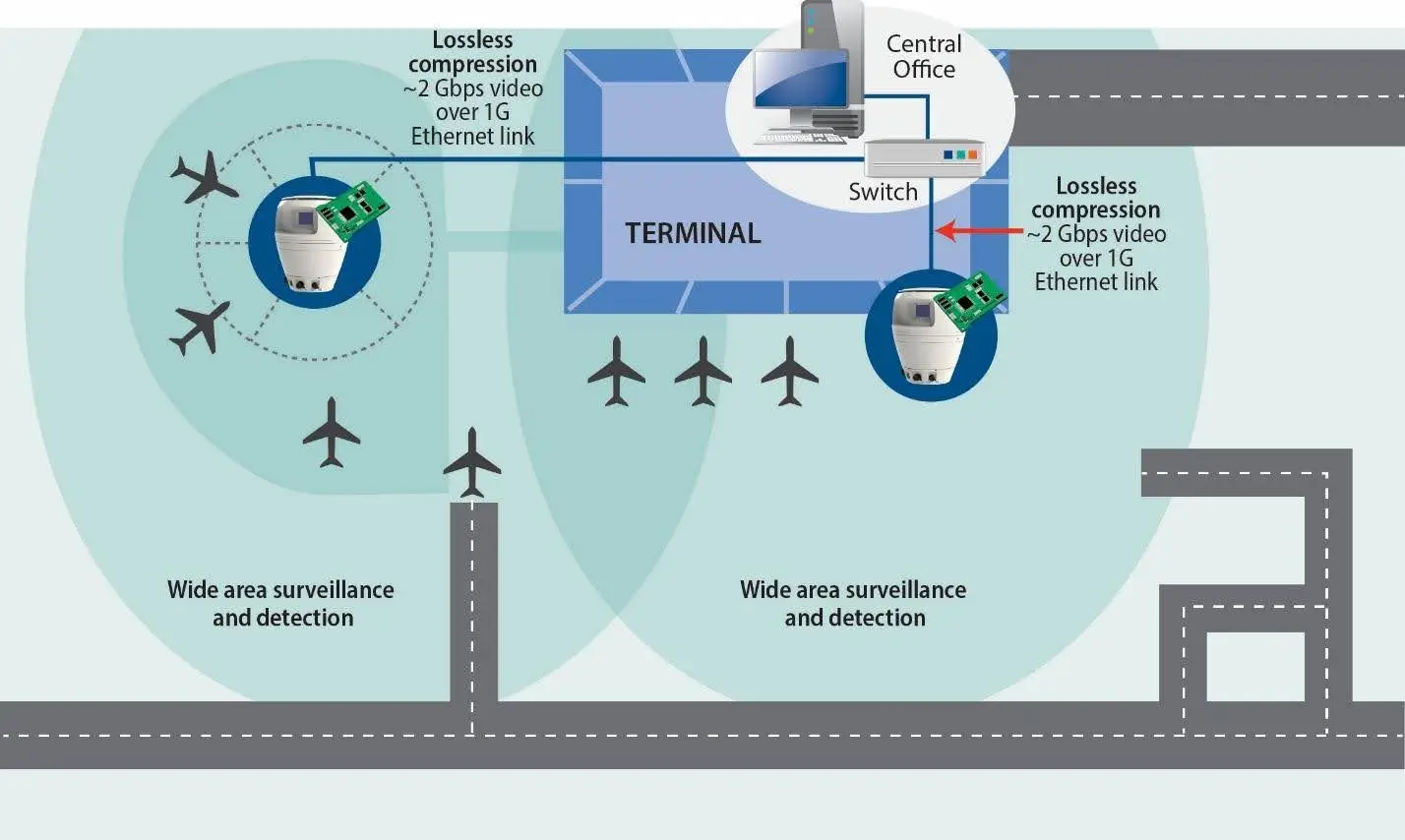

Typically, machine vision systems generate large amounts of data, especially when dealing with high-resolution images or video streams. Compression reduces the size of the data, making it more manageable and cost-effective to store. This storage efficiency is crucial, especially in applications that continuously generate large datasets, such as medical imaging, industrial automation, and surveillance.

Compression also reduces the amount of data that must be transferred. This bandwidth reduction is critical in real-time applications where low latency is essential, and in environments where bandwidth is constrained, such as embedded processing, robotics, or remote sensing.

As these applications become more complex, compression is a key technique that allows a designer to transmit more data over an existing architecture. In networked machine vision applications, compression helps maintain acceptable quality while reducing the data size, so that important details can be preserved even in resource-constrained environments.

Additionally, machine vision technology is increasingly designed into embedded devices with limited computational resources. Compressing data allows these systems to conserve resources such as CPU time, memory, and energy. This is particularly important in edge computing applications, where devices operate with constrained resources.

Finally, and very importantly, there are cost savings to be had. Storing and transmitting large amounts of data can be expensive. Compression helps reduce these costs by decreasing the space required for data storage and minimizing the amount of data transmitted over networks.
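A quick back-of-the-envelope calculation shows why bandwidth matters. The camera parameters, the 1 Gbit/s link, and the 2:1 compression ratio below are assumed example values, not figures for any specific product.

```python
def raw_data_rate_mbps(width: int, height: int, bits_per_pixel: int, fps: float) -> float:
    """Uncompressed video data rate in megabits per second (1 Mbit = 10**6 bits)."""
    return width * height * bits_per_pixel * fps / 1_000_000

# Assumed example: a 1920x1080, 8-bit monochrome camera running at 60 frames/s.
raw_mbps = raw_data_rate_mbps(1920, 1080, 8, 60)

LINK_MBPS = 1000.0   # assumed link capacity (1 Gbit/s), ignoring protocol overhead
ASSUMED_RATIO = 2.0  # assumed compression ratio, for illustration only

compressed_mbps = raw_mbps / ASSUMED_RATIO

print(f"raw:        {raw_mbps:.1f} Mbit/s ({raw_mbps / LINK_MBPS:.0%} of the link)")
print(f"compressed: {compressed_mbps:.1f} Mbit/s ({compressed_mbps / LINK_MBPS:.0%} of the link)")
```

Under these assumptions the uncompressed stream nearly saturates the link on its own, while the compressed stream leaves room for a second camera or a higher frame rate.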

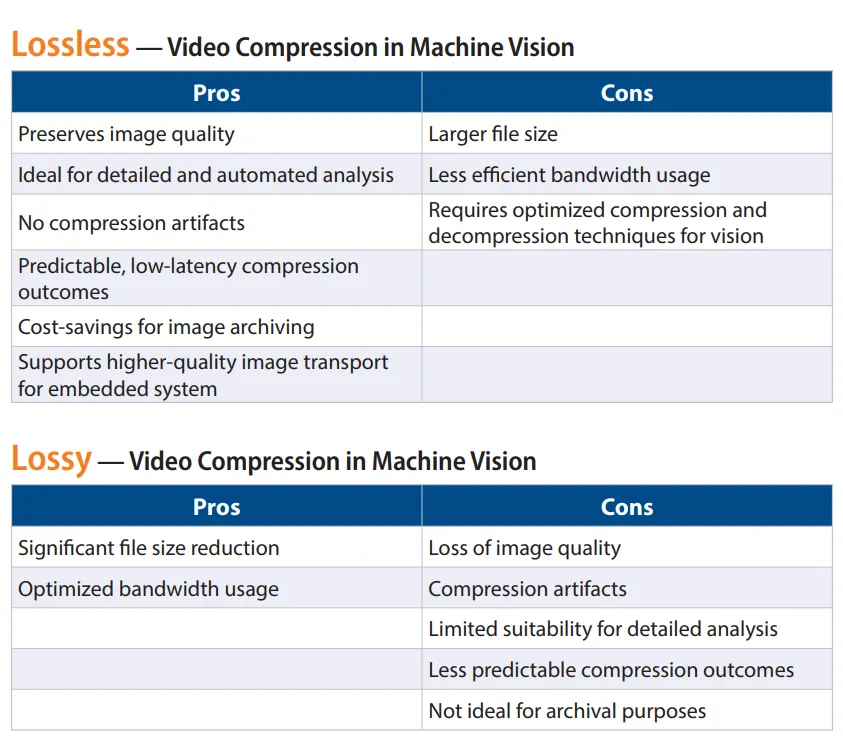

Lossless and lossy compression are the two broad categories of compression used in machine vision. Each has its own intrinsic qualities and capabilities, and each trades off compression ratio against how faithfully the image is preserved.

The choice of compression method depends on the specific requirements of the given application, such as the importance of preserving fine details, the available bandwidth, and the acceptable level of data loss.